TL;DR

- AI detectors do not actually 'detect' AI; they estimate how statistically probable your word choices are under a language model.

- Perplexity measures how predictable your word choices are to a language model. Low perplexity reads as AI. High perplexity reads as Human.

- Burstiness measures sentence length variation. Uniform sentences = AI. Chaotic, varied sentences = Human.

- Watermarking (injecting hidden cryptographic signals into AI output) is the future, but current detectors rely entirely on flawed statistical classifiers.

- Because detectors penalize 'predictable' formal writing, they are structurally prone to falsely flagging human academic and technical writers.

The term 'AI Detector' is a brilliant marketing lie. These tools do not possess a magical scanner that sees a ChatGPT watermark. They are simply statistical classifiers. They look at a piece of text and ask: 'How likely is it that an algorithm would choose these exact words in this exact order?'

The Two Pillars of Detection: Perplexity and Burstiness

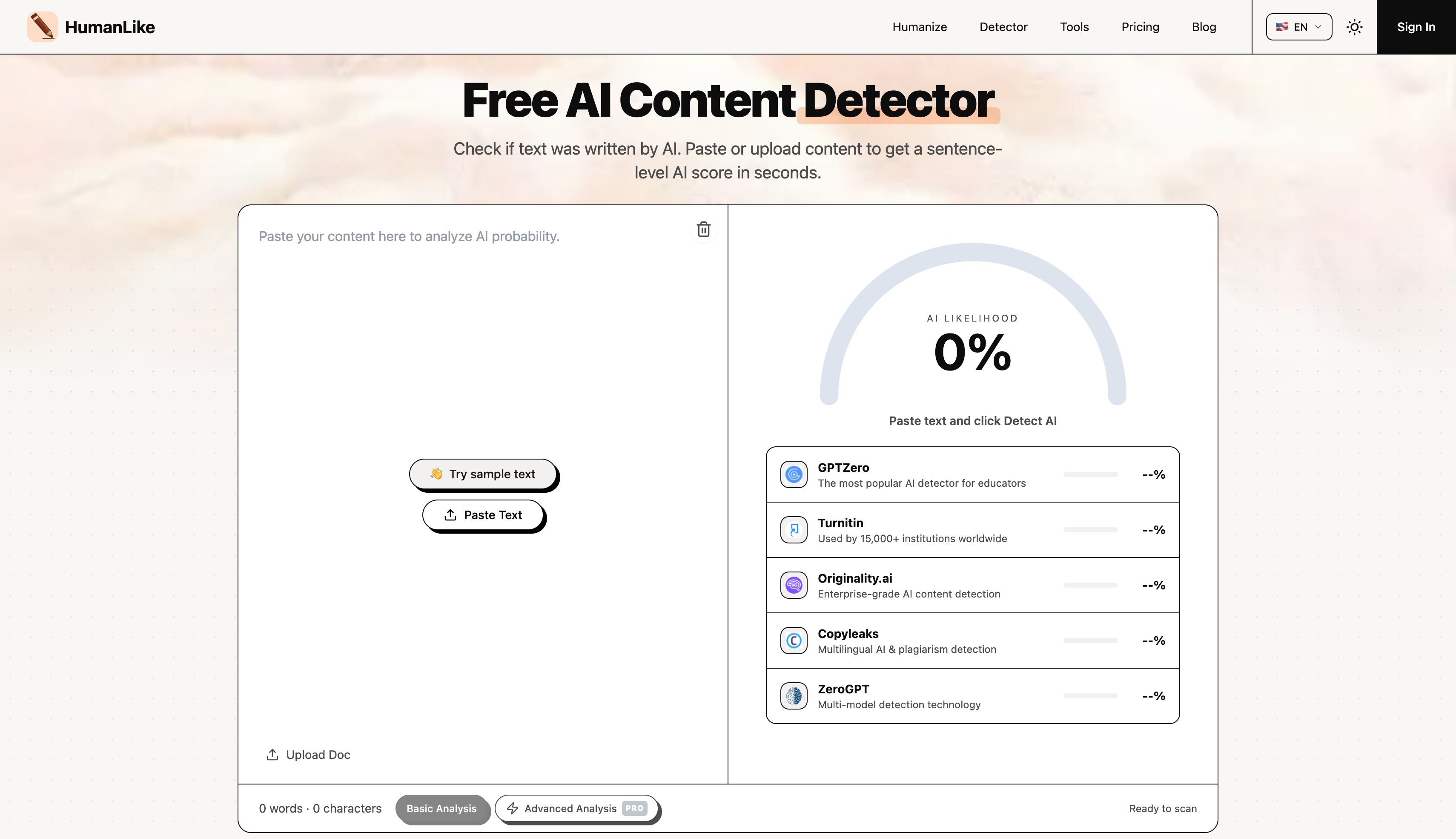

The major detectors on the market (Turnitin, GPTZero, Originality.ai) are generally understood to build their core algorithms on two primary NLP (Natural Language Processing) metrics.

1. Perplexity (The Vocabulary Predictability)

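Large Language Models end in a Softmax function, which turns the model's raw scores into a probability for every possible next token. The model then samples from that distribution, usually favoring the likeliest options. If the sentence is 'I am going to drink a glass of...', the LLM will most often pick 'water'. Perplexity measures how surprised a language model is by a text: formally, it is the exponential of the average negative log-probability the model assigns to each token. If a detector sees 'water', the text gets a low perplexity score (Highly Predictable = AI). If a human writes 'I am going to drink a glass of liquid courage', the text gets a high perplexity score (Unpredictable = Human).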
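To make the definition concrete, here is a minimal sketch of the perplexity calculation. The per-token probabilities are made up for illustration; a real detector would get them from its own scoring model, and nothing here reflects any specific vendor's implementation.

```python
import math


def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical probabilities a scoring model might assign to each token.
predictable = [0.9, 0.8, 0.9, 0.85]  # e.g. "... a glass of water"
surprising = [0.9, 0.8, 0.05, 0.02]  # e.g. "... a glass of liquid courage"

print(perplexity(predictable))  # lower score: the model saw these words coming
print(perplexity(surprising))   # higher score: the model was surprised
```

Two rare tokens are enough to pull the average up sharply, which is why a single unusual phrase can shift a short text's score.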

2. Burstiness (The Structural Chaos)

Humans are chaotic writers. We write a massive, winding, 40-word run-on sentence. Then we stop. And write a three-word fragment. This variation is called 'Burstiness'. AI models are trained to be readable and helpful, so they tend to output paragraphs where nearly every sentence lands in the same balanced range of roughly 15-20 words. Detectors measure that structural variance. Near-perfect symmetry gets flagged as AI.
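There is no single agreed formula for burstiness. As one plausible sketch, the code below scores it as the coefficient of variation of sentence lengths (standard deviation divided by mean). The naive punctuation-based sentence splitter and the choice of statistic are my assumptions, not any detector's documented method.

```python
import re
import statistics


def sentence_lengths(text):
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence lengths (std dev / mean)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is undefined for a single sentence
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat on the wire."
varied = (
    "We write a long winding sentence that keeps going well past the point "
    "of comfort. Then we stop. And write a fragment."
)

print(burstiness(uniform))  # 0.0: every sentence has six words
print(burstiness(varied))   # clearly above zero
```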

| Metric | AI Behavior | Human Behavior | Detector Logic |
|---|---|---|---|
| Perplexity | Picks highly probable words | Uses slang, quirks, rare words | Low Perplexity = AI |
| Burstiness | Uniform sentence lengths | Chaotic, varied sentence lengths | Low Burstiness = AI |

Companies like Turnitin train their detectors using massive datasets. In a typical setup, a neural network is fed something like 10 million human essays (written before 2021) and 10 million ChatGPT essays. The neural network learns the subtle footprint of the LLM. It notices that AI loves words like 'delve', 'tapestry', and 'testament'. It notices that AI frequently opens paragraphs with transitional adverbs like 'Furthermore'.
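A trained classifier learns thousands of signals like these on its own. As a toy illustration of just one of them, the sketch below computes how often the marker words named above appear in a text. The word list and the `marker_rate` helper are hypothetical and hand-picked for this example; this is not how any commercial detector is known to work.

```python
import re

# Marker words taken from the paragraph above; a real classifier learns its own features.
MARKERS = {"delve", "tapestry", "testament", "furthermore"}


def marker_rate(text):
    """Fraction of words in the text that belong to the marker set."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    return sum(w in MARKERS for w in words) / len(words)


print(marker_rate("Furthermore, we delve into it."))  # 2 of 5 words are markers
print(marker_rate("Then we stop."))                   # no markers at all
```

On its own, a feature like this is easy to fool and easy to trip by accident, which is one reason classifiers combine many of them.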

📊The University of Maryland Impossibility Theorem

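A landmark UMD study showed that the best possible detector's accuracy is capped by how far the statistical distribution of LLM output sits from that of human writing. As LLMs become more advanced and the two distributions converge, that cap falls toward a coin flip. Conclusion: if the gap keeps closing, reliable AI detection becomes mathematically impossible in the long term.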
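As I recall the result, the bound is stated in terms of the total variation distance (TV) between machine and human text distributions: the detector's AUROC can be at most 1/2 + TV - TV²/2. The sketch below simply evaluates that expression; treat the exact form as my reading of the paper and check it against the original before relying on it.

```python
def auroc_upper_bound(tv):
    """Upper bound on any detector's AUROC for a given total variation distance."""
    if not 0.0 <= tv <= 1.0:
        raise ValueError("total variation distance must be in [0, 1]")
    return 0.5 + tv - tv ** 2 / 2


# The TV values below are illustrative, not measurements of any real model.
for tv in (1.0, 0.5, 0.1, 0.0):
    print(tv, auroc_upper_bound(tv))
```

At TV = 0 the bound is 0.5, which is exactly the performance of random guessing.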

Because statistical detection is failing, OpenAI and Google are working on 'Watermarking'. This means tweaking the LLM so it subtly selects specific, mathematically identifiable patterns of tokens that a decoder can recognize. If this becomes industry standard, basic spinners will fail instantly. Only deep-reconstruction humanizers (like HumanLike.pro) that completely destroy and rebuild the token chain will bypass it.
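To show what 'mathematically identifiable patterns of tokens' can mean, here is a sketch of one publicly described approach, often called a green-list watermark: the previous token seeds a pseudo-random split of the vocabulary, the generator favors the 'green' half, and the decoder counts green tokens and computes a z-score. Whether OpenAI or Google use anything like this is not something the sketch claims; the hash scheme, the `GAMMA` value, and the toy vocabulary are all assumptions for illustration.

```python
import hashlib
import math

GAMMA = 0.5  # assumed share of the vocabulary treated as "green" at each step


def is_green(prev_token, token, gamma=GAMMA):
    """Pseudo-randomly mark `token` green, seeded by the token before it."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to [0, 1) and compare with gamma.
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def watermark_z_score(tokens, gamma=GAMMA):
    """How far the green-token count sits above what unwatermarked text would give."""
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        return 0.0
    green = sum(is_green(prev, tok, gamma) for prev, tok in pairs)
    n = len(pairs)
    return (green - gamma * n) / math.sqrt(n * gamma * (1 - gamma))


# Toy "watermarked generator": always pick a green token from a made-up vocabulary.
vocab = [f"w{i}" for i in range(50)]
marked = ["w0"]
for _ in range(40):
    marked.append(next(t for t in vocab if is_green(marked[-1], t)))

print(watermark_z_score(marked))  # large positive value: every scored token is green
```

Ordinary text should score near zero on average, because about half of its tokens land on the green list by chance. Rewriting changes which token precedes which, so the green count drifts back toward that baseline.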

If you understand how detectors work, you understand why tools like QuillBot fail. You cannot trick a perplexity algorithm just by swapping 'happy' for 'joyful'. You have to attack the math.

HumanLike.pro is engineered to solve the exact equations detectors look for. It forces the output model to intentionally select lower-probability tokens (spiking perplexity) and aggressively splices and merges sentences (spiking burstiness). It doesn't just hide the AI; it mathematically transforms the text into human data.

Our Verdict

The Reality of Detection Technology

- AI detectors are flawed statistical probability engines. They punish clear, formal human writing and create disastrous false positives. Until the industry moves away from them, your only defense is understanding their mathematical limitations and using tools like HumanLike.pro to alter your text's perplexity footprint.

I built HumanLike.pro by reverse-engineering these exact detection metrics.